Chains are fine for demos. When your AI system needs to make decisions, retry on failure, call tools conditionally, or maintain state across multiple steps, you need something with more structure.

LangGraph is the framework we've moved to for production agent systems. It's lower-level than LangChain's high-level abstractions, which means more code and significantly more control. That trade-off is worth it the moment you're deploying something real users depend on.

What LangGraph actually is

LangGraph models your AI workflow as a directed graph: nodes are processing steps (LLM calls, tool executions, conditional logic), and edges define the flow between them. The graph can loop, branch, and maintain persistent state across steps.

The practical difference from a simple chain: LangGraph lets you build agents that can reason, act, observe the result, and reason again — in a controlled loop with explicit state management. You know exactly what state exists at every node and where the system is in its execution at any point.

When to use LangGraph vs simpler approaches

| Scenario | Recommended approach |
|---|---|
| Single LLM call, fixed output | Direct API call, no framework needed |
| Sequential steps, no branching | Simple chain (LangChain LCEL or custom) |
| Conditional routing, tool use | LangGraph, worth the overhead |
| Multi-step reasoning with retries | LangGraph |
| Human-in-the-loop checkpoints | LangGraph, built-in support |
| Long-running tasks, persistent state | LangGraph + LangGraph Platform |

The core concepts you need to understand

State: A typed dictionary that persists across the entire graph execution. Every node reads from and writes to this shared state. Define it explicitly, as an annotated TypedDict in Python. In our experience, sloppy state definitions are the most common source of bugs in LangGraph systems.

Nodes: Python functions that take state as input and return state updates. Keep nodes small and single-purpose — they're easier to test and easier to replace.
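A minimal sketch of the State and Nodes ideas, assuming a hypothetical `AgentState` schema. The `Annotated[..., operator.add]` form is how a state key declares a reducer in LangGraph, so updates to that key are combined with the old value instead of overwriting it. The `apply_update` helper is not a LangGraph API; it only imitates the merge step so the example runs with the standard library alone.

```python
import operator
from typing import Annotated, TypedDict, get_args, get_origin, get_type_hints


class AgentState(TypedDict):
    question: str                               # overwritten on each update
    attempts: int                               # overwritten on each update
    notes: Annotated[list[str], operator.add]   # reducer: new items are appended


def record_attempt(state: AgentState) -> dict:
    """Node: returns only the keys it changes, not the whole state."""
    attempt = state["attempts"] + 1
    return {"attempts": attempt, "notes": [f"attempt {attempt} started"]}


def apply_update(state: AgentState, update: dict) -> AgentState:
    """Illustrative stand-in for the framework's merge step (not a LangGraph API)."""
    hints = get_type_hints(AgentState, include_extras=True)
    merged = dict(state)
    for key, value in update.items():
        hint = hints[key]
        if get_origin(hint) is Annotated:
            reducer = get_args(hint)[1]   # first metadata item is the reducer
            merged[key] = reducer(state[key], value)
        else:
            merged[key] = value           # no reducer: last write wins
    return merged
```

Because the node is a plain function over a dictionary, it can be called directly in a unit test without building a graph.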

Edges: Define what happens after each node. Conditional edges evaluate state and route to different nodes — this is where the agent's decision-making logic lives.

Checkpointing: LangGraph can persist graph state to a database at each step, enabling pause-and-resume, human review interrupts, and recovery from failures. For anything in production, checkpointing is not optional.

A pattern we use in production: the review-and-act loop

The most reliable pattern we've built with LangGraph is review-and-act: the agent takes an action, observes the result, evaluates whether it succeeded, and either proceeds or retries with an adjusted approach.

Concretely: a document processing agent calls a tool to extract structured data from a PDF. A validation node checks whether the output matches the expected schema. If it passes, the graph continues. If not, the graph routes to a retry node that adjusts the extraction prompt — up to a configured maximum. If retries are exhausted, it routes to a human review queue.
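The loop described here can be sketched roughly as follows. The state fields, `REQUIRED_FIELDS`, `MAX_RETRIES`, and the node names are all assumptions made for illustration, and the extraction tool and review queue are passed in as callables because the post does not describe them. The wiring uses LangGraph's `StateGraph`, `add_node`, `add_edge`, and `add_conditional_edges`; the import sits inside `build_graph` so the node and routing functions stay testable without the framework installed.

```python
from typing import Callable, TypedDict

MAX_RETRIES = 3                                  # assumed retry budget
REQUIRED_FIELDS = ("invoice_number", "total")    # assumed expected schema


class ExtractionState(TypedDict):
    prompt: str
    extracted: dict
    is_valid: bool
    missing: list[str]
    attempts: int


def validate(state: ExtractionState) -> dict:
    """Check the extracted data against the expected schema."""
    missing = [f for f in REQUIRED_FIELDS if f not in state["extracted"]]
    return {"is_valid": not missing, "missing": missing}


def adjust_prompt(state: ExtractionState) -> dict:
    """Retry node: tighten the prompt and count the attempt."""
    hint = ", ".join(state["missing"])
    return {
        "prompt": f"{state['prompt']}\nBe sure to include: {hint}.",
        "attempts": state["attempts"] + 1,
    }


def route_after_validation(state: ExtractionState) -> str:
    """Conditional edge: continue, retry, or escalate to a human."""
    if state["is_valid"]:
        return "continue"
    if state["attempts"] < MAX_RETRIES:
        return "retry"
    return "human_review"


def build_graph(extract: Callable, human_review: Callable):
    """Wire the loop; `extract` and `human_review` are caller-supplied nodes."""
    from langgraph.graph import END, START, StateGraph

    builder = StateGraph(ExtractionState)
    builder.add_node("extract", extract)
    builder.add_node("validate", validate)
    builder.add_node("adjust_prompt", adjust_prompt)
    builder.add_node("human_review", human_review)
    builder.add_edge(START, "extract")
    builder.add_edge("extract", "validate")
    builder.add_conditional_edges(
        "validate",
        route_after_validation,
        # In this sketch "continue" simply ends the graph.
        {"continue": END, "retry": "adjust_prompt", "human_review": "human_review"},
    )
    builder.add_edge("adjust_prompt", "extract")
    builder.add_edge("human_review", END)
    return builder.compile()
```

Keeping the retry count in state, rather than in a local variable, is what makes the routing decision visible in traces and reproducible from a checkpoint.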

What makes LangGraph valuable here: the entire flow — the state at each step, routing decisions, retry count, final output — is observable, testable, and debuggable. You can replay any execution from any checkpoint.

Human-in-the-loop: the feature most teams overlook

LangGraph has first-class support for interrupting graph execution to wait for human input. The graph pauses at a defined checkpoint, persists its state, and resumes when a human provides a response or approval.

We use this for any AI action that's consequential and hard to reverse — sending emails, writing to production databases, triggering financial transactions. The agent prepares the action, a human reviews and approves, the graph resumes and executes. One wrong approval is recoverable. An agent that autonomously executes 500 wrong database writes is not.
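One way to structure the approval step, as a sketch under stated assumptions. In LangGraph the `ask` callable would be `langgraph.types.interrupt`, which pauses the run and later returns whatever value the graph is resumed with via `Command(resume=...)`; this requires a checkpointer. Injecting it as a parameter is our choice here, so the node can be tested with a stub. The `EmailState` fields, the shape of the decision dictionary, and the `send_email` and `discard` node names are hypothetical.

```python
from typing import Callable, TypedDict


class EmailState(TypedDict):
    recipient: str
    draft: str
    status: str


def make_approval_node(ask: Callable[[dict], dict]):
    """Build an approval node; pass langgraph.types.interrupt as `ask` in a real graph."""

    def approval_node(state: EmailState) -> dict:
        # Everything before `ask` runs again when the graph resumes,
        # so this part should stay free of side effects.
        request = {
            "action": "send_email",
            "recipient": state["recipient"],
            "draft": state["draft"],
        }
        decision = ask(request)   # pauses here until a human responds
        if decision.get("approved"):
            # The reviewer may optionally return an edited draft.
            return {"status": "approved", "draft": decision.get("edited_draft", state["draft"])}
        return {"status": "rejected"}

    return approval_node


def route_after_approval(state: EmailState) -> str:
    """Conditional edge: only approved actions reach the node that executes them."""
    return "send_email" if state["status"] == "approved" else "discard"
```

The irreversible step lives in its own node after the approval, so a rejected or abandoned review can never trigger it.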

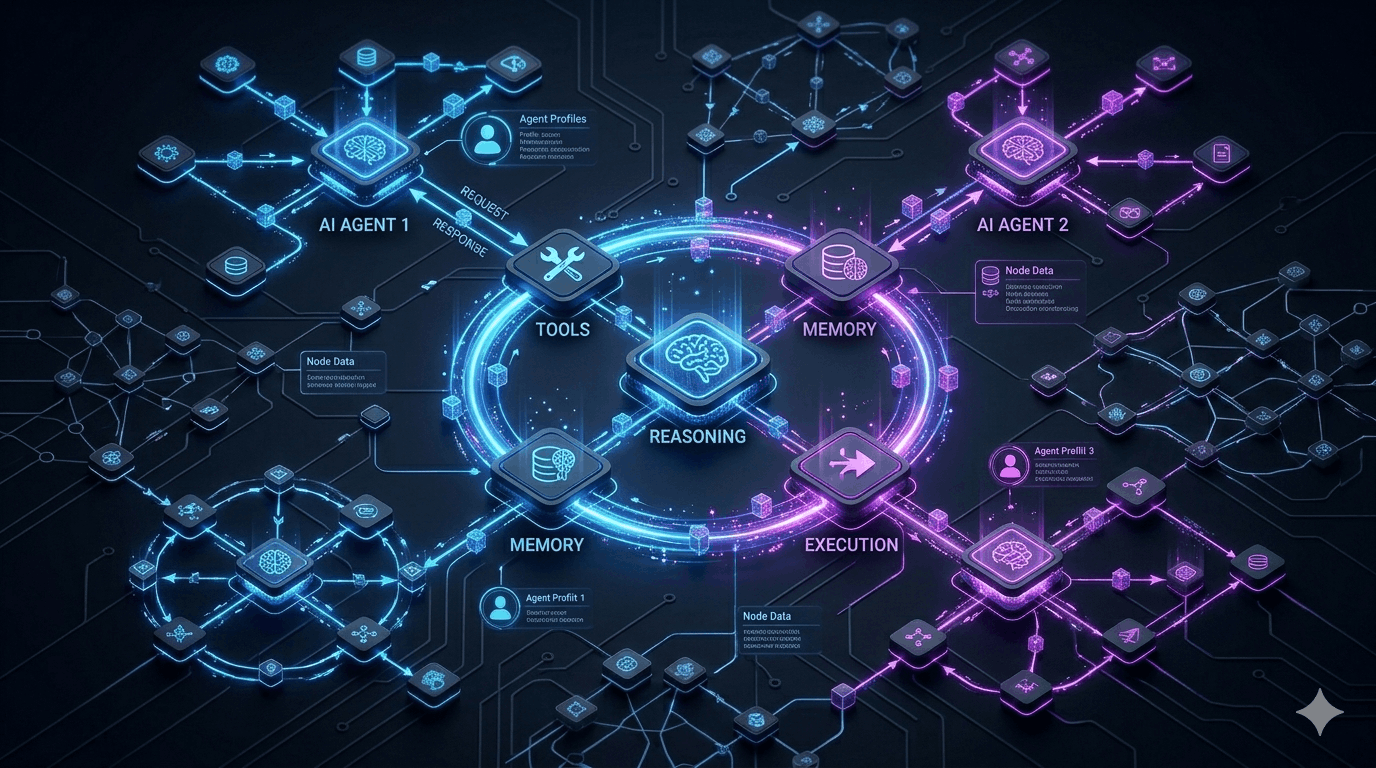

Memory and persistence patterns

Short-term memory (within a session): The graph state itself. For conversational agents, this includes message history. Be deliberate about state size — storing full conversation history of long sessions bloats checkpoints. Implement a sliding window or summarisation strategy.
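A sliding window can be as small as the sketch below. It assumes messages are plain dictionaries with `role` and `content` keys, which is an illustrative shape rather than a LangGraph type, and that system messages should always survive trimming.

```python
def trim_history(messages: list[dict], max_messages: int = 20) -> list[dict]:
    """Keep all system messages plus the most recent `max_messages` others."""
    if max_messages < 0:
        raise ValueError("max_messages must be non-negative")
    system = [m for m in messages if m["role"] == "system"]
    others = [m for m in messages if m["role"] != "system"]
    # Slicing with -0 would keep everything, so handle zero explicitly.
    recent = others[-max_messages:] if max_messages else []
    # System messages are moved to the front of the returned list.
    return system + recent
```

How the trimmed list gets written back depends on the reducer attached to that state key. If the key appends rather than overwrites, it may be simpler to trim only what is sent to the model.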

Long-term memory (across sessions): Information about users and preferences that should survive beyond a single execution. The pattern: at the end of relevant executions, extract and write key facts to a persistent store (a Postgres table works). At the start of new sessions, retrieve relevant memories and inject them into initial state.
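The write-at-end, read-at-start pattern might look like this. The sketch uses an in-memory SQLite database as a stand-in for the Postgres table so it runs anywhere; the `user_memory` table, its columns, and the function names are all hypothetical.

```python
import sqlite3


def init_store(conn: sqlite3.Connection) -> None:
    conn.execute(
        """CREATE TABLE IF NOT EXISTS user_memory (
               user_id    TEXT NOT NULL,
               fact_key   TEXT NOT NULL,
               fact_value TEXT NOT NULL,
               PRIMARY KEY (user_id, fact_key)
           )"""
    )


def remember(conn: sqlite3.Connection, user_id: str, facts: dict[str, str]) -> None:
    """End of an execution: upsert the key facts extracted from it."""
    # SQLite syntax; on Postgres this would be INSERT ... ON CONFLICT ... DO UPDATE.
    conn.executemany(
        "INSERT OR REPLACE INTO user_memory (user_id, fact_key, fact_value) VALUES (?, ?, ?)",
        [(user_id, key, value) for key, value in facts.items()],
    )
    conn.commit()


def recall(conn: sqlite3.Connection, user_id: str) -> dict[str, str]:
    """Start of a session: load what is known about this user."""
    rows = conn.execute(
        "SELECT fact_key, fact_value FROM user_memory WHERE user_id = ?", (user_id,)
    ).fetchall()
    return dict(rows)


def initial_state(conn: sqlite3.Connection, user_id: str, question: str) -> dict:
    """Inject retrieved memories into the state a new run starts from."""
    return {"question": question, "memories": recall(conn, user_id)}
```

Keying facts by `(user_id, fact_key)` means a newer preference replaces the older one instead of piling up alongside it.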

Checkpoint backends: Use langgraph-checkpoint-postgres in production. Set a retention policy — old checkpoints accumulate fast and most are never needed after the session ends.

Multi-agent architectures

For complex tasks that benefit from specialisation, LangGraph supports multi-agent patterns — multiple graphs that coordinate to complete a task.

Supervisor pattern: A supervisor agent receives a task, breaks it down, delegates to specialised subgraphs, and synthesises results. Maps well to: researcher agent collects → analyst agent synthesises → writer agent produces → supervisor reviews and either finalises or sends back.

Handoff pattern: Agents pass control to each other explicitly with accumulated state. Cleaner than the supervisor pattern for linear multi-step workflows where each agent has a clear responsibility boundary.

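    # Supervisor routing: pick the next agent from completion flags in state.
    from typing import TypedDict
    from langgraph.graph import END

    class SupervisorState(TypedDict):
        research_complete: bool
        analysis_complete: bool
        review_passed: bool

    def route_to_agent(state: SupervisorState) -> str:
        if not state["research_complete"]: return "researcher"
        elif not state["analysis_complete"]: return "analyst"
        elif not state["review_passed"]: return "reviewer"
        else: return END

The key principle: keep communication interfaces between agents narrow and typed. Agents communicate through well-defined state structures, not free-form text.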
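To make "narrow and typed" concrete, here is a sketch of a handoff payload between a researcher and an analyst. The `ResearchHandoff` fields and the `as_handoff` helper are hypothetical; the point is that anything outside the agreed structure is rejected at the boundary rather than silently passed along.

```python
from typing import TypedDict


class ResearchHandoff(TypedDict):
    topic: str
    findings: list[str]
    sources: list[str]


def as_handoff(payload: dict) -> ResearchHandoff:
    """Validate a dict against the handoff contract before the next agent sees it."""
    expected = set(ResearchHandoff.__annotations__)
    missing = expected - set(payload)
    unexpected = set(payload) - expected
    if missing or unexpected:
        raise ValueError(
            f"bad handoff: missing={sorted(missing)}, unexpected={sorted(unexpected)}"
        )
    if not isinstance(payload["topic"], str):
        raise TypeError("topic must be a string")
    for key in ("findings", "sources"):
        value = payload[key]
        if not isinstance(value, list) or not all(isinstance(v, str) for v in value):
            raise TypeError(f"{key} must be a list of strings")
    return ResearchHandoff(
        topic=payload["topic"],
        findings=list(payload["findings"]),
        sources=list(payload["sources"]),
    )
```

Rejecting extra keys is deliberate: scratchpads and raw transcripts tend to leak across agent boundaries unless the interface refuses them.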

Streaming agent responses

LangGraph supports streaming at multiple granularities. Token streaming emits tokens as they're generated — wire to your frontend via SSE for the typewriter effect. Node streaming emits events as each node starts and completes, useful for showing progress in multi-step agents. State streaming emits full state after each checkpoint.

Use graph.astream() for async contexts (FastAPI) and graph.stream() for sync. The stream_mode parameter controls granularity: "values" for full state, "updates" for deltas, "messages" for token-level streaming.
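As an illustration of node-level progress streaming, the sketch below turns `stream_mode="updates"` chunks into SSE frames. It relies on each chunk being a dictionary that maps a node name to the update that node returned. The event names and payload shape are our own assumptions, and `graph` is anything exposing a compatible `stream` method, such as a compiled LangGraph graph.

```python
import json
from typing import Any, Iterator


def sse_progress(graph: Any, inputs: dict) -> Iterator[str]:
    """Yield one SSE frame per finished node, then a final 'done' frame."""
    for chunk in graph.stream(inputs, stream_mode="updates"):
        for node_name, update in chunk.items():
            # Report which state keys changed, not their (possibly large) values.
            changed = sorted(update) if isinstance(update, dict) else []
            payload = {"node": node_name, "changed": changed}
            yield f"event: node\ndata: {json.dumps(payload)}\n\n"
    yield "event: done\ndata: {}\n\n"
```

In an async FastAPI handler the same loop would iterate over `graph.astream(...)` with `async for` instead.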

Testing LangGraph agents

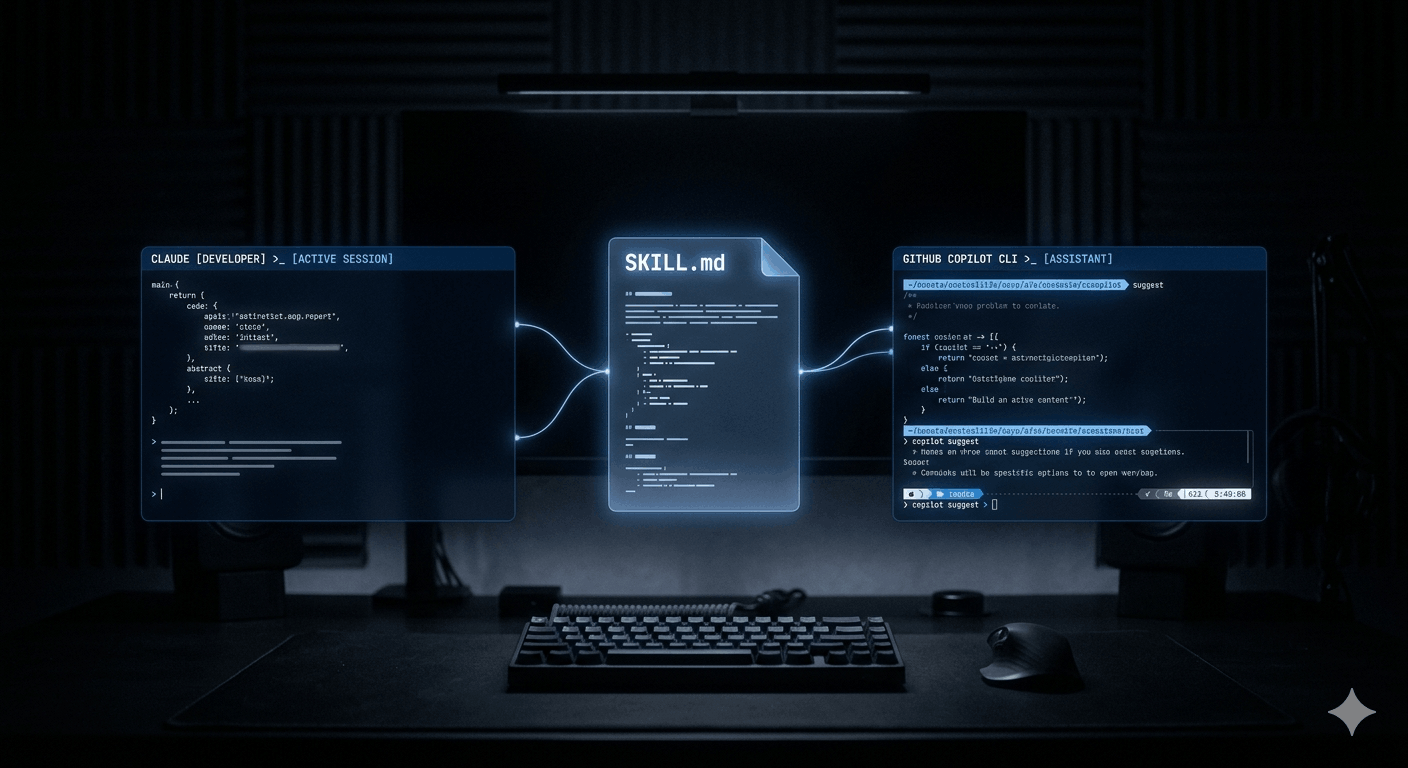

Test nodes in isolation first — they're just functions, and unit testing them is straightforward. Then build integration tests that run the full graph against a golden dataset of inputs with expected state transitions. Check not just the final output but the path taken: did it retry when expected? Did it route to the correct branch?
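For the "path taken" check, one option is to record node names from the `"updates"` stream and compare them with the expected sequence for each golden input. This is a sketch: `node_path` and `failed_cases` are hypothetical helpers, and the golden dataset is assumed to be a list of `(inputs, expected_path)` pairs.

```python
from typing import Any


def node_path(graph: Any, inputs: dict) -> list[str]:
    """Return the names of the nodes that ran, in execution order."""
    path: list[str] = []
    for chunk in graph.stream(inputs, stream_mode="updates"):
        # Skip framework-level keys such as "__interrupt__".
        path.extend(name for name in chunk if not name.startswith("__"))
    return path


def failed_cases(graph: Any, golden_cases: list[tuple[dict, list[str]]]) -> list[dict]:
    """Run every golden input and collect the cases whose path differs."""
    failures = []
    for inputs, expected_path in golden_cases:
        actual = node_path(graph, inputs)
        if actual != expected_path:
            failures.append({"inputs": inputs, "expected": expected_path, "actual": actual})
    return failures
```

A test then asserts that `failed_cases(graph, cases)` is empty, and the returned list shows exactly where a run diverged when it is not.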

LangSmith integrates directly with LangGraph and gives you full trace visibility in production — every node execution, every state transition, every LLM call with its inputs and outputs. For production agent systems, this observability is not a nice-to-have.

Deploying with LangGraph Platform

LangGraph Platform handles deployment, scaling, and operational concerns of running persistent agent systems. It provides a REST API over your graph, built-in checkpoint storage, a monitoring UI, and horizontal scaling without infrastructure management.

For self-hosted deployments: FastAPI application wrapping the graph, Postgres for checkpoints, Redis for queue-based coordination, deployed on ECS or Kubernetes. The langgraph-cli generates a Docker-compatible server from your graph definition. Monitor with LangSmith for trace-level visibility.

The honest take

LangGraph has a real learning curve. The state management model is different from how most engineers think about application code, and the graph abstraction takes time to internalise.

The payoff: agent systems that are debuggable, testable, and maintainable by engineers who didn't build them. That's the standard any production system needs to meet — and it's the standard most AI frameworks make surprisingly hard to achieve.