Most AI features ship twice. Once as a demo that impresses everyone in the room. Once as a production system that nobody uses.

The gap between those two moments is where most engineering teams get lost. After integrating AI into production systems across FinTech, HealthTech, and B2B SaaS over the past year, we've developed strong opinions about why features fail — and what the ones that stick actually have in common.

Start with the workflow, not the model

The biggest mistake we see: teams start with "we should add AI" and work backwards to find a use case. The projects that succeed start with a real workflow problem and evaluate whether AI is the right solution.

One client came to us wanting a "ChatGPT for their knowledge base." What they actually needed was a structured search system with AI-powered summarization on top. The distinction matters — it changed the architecture, the UX, and the reliability profile entirely.

Prompt engineering is software engineering

We treat prompts as code. They live in version control, they have tests, they go through review. A prompt that works in a playground often fails in production because real user inputs are messy, ambiguous, and adversarial in ways you can't anticipate.

Our approach: define the prompt contract (inputs, expected outputs, edge cases), write evaluation tests, and iterate on the prompt against real data before it touches production.
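Here is a minimal sketch of what "prompt as code" can look like, assuming the model returns JSON. The `PromptContract`, `EvalCase`, and `run_evals` names are hypothetical, and `fake_model` stands in for a real provider call so the harness runs offline. The point is that the contract (required inputs, required output keys) and the eval cases live in the repo next to the template and can run in CI.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PromptContract:
    """A versioned prompt: its template, required inputs, and required output keys."""
    version: str
    template: str
    required_inputs: tuple[str, ...]
    output_keys: tuple[str, ...]

    def render(self, **inputs: str) -> str:
        missing = [name for name in self.required_inputs if name not in inputs]
        if missing:
            raise ValueError(f"missing prompt inputs: {missing}")
        return self.template.format(**inputs)


@dataclass
class EvalCase:
    name: str
    inputs: dict[str, str]
    expected: dict[str, str]


def run_evals(contract: PromptContract, cases: list[EvalCase],
              call_model: Callable[[str], str]) -> dict[str, bool]:
    """Run every case through the model and record pass/fail per case."""
    results: dict[str, bool] = {}
    for case in cases:
        raw = call_model(contract.render(**case.inputs))
        try:
            output = json.loads(raw)
        except json.JSONDecodeError:
            results[case.name] = False  # unparseable output violates the contract
            continue
        results[case.name] = (
            isinstance(output, dict)
            and all(key in output for key in contract.output_keys)
            and all(output.get(k) == v for k, v in case.expected.items())
        )
    return results


# Illustrative contract and cases; the template wording and labels are made up.
SENTIMENT_V2 = PromptContract(
    version="sentiment-v2",
    template=("Classify the review as positive or negative. "
              "Reply with a JSON object containing a single key, label.\n"
              "Review: {review}"),
    required_inputs=("review",),
    output_keys=("label",),
)

CASES = [
    EvalCase("clean_positive", {"review": "Works great, would buy again."}, {"label": "positive"}),
    EvalCase("messy_negative", {"review": "wtf?? 2nd time it broke. REFUND"}, {"label": "negative"}),
]


def fake_model(prompt: str) -> str:
    """Offline stand-in for a real model call."""
    return '{"label": "negative"}' if "refund" in prompt.lower() else '{"label": "positive"}'
```

Messy, real-world inputs (like the second case) belong in `CASES` as soon as they show up in production logs, so a prompt change that regresses on them fails review.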

The reliability problem

LLMs are non-deterministic: the same input can produce different outputs. For a consumer chatbot, that variability is usually acceptable. For business-critical workflows such as invoice processing, medical record summarization, and compliance checks, it's a serious engineering challenge.

We solve this with structured outputs (JSON mode, function calling), validation layers, confidence scoring, and human-in-the-loop fallbacks. Every AI feature we ship has a clearly defined failure mode and a graceful degradation path.
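A minimal sketch of the validate, retry, then degrade pattern, assuming the model is asked to return a JSON object with a `summary` and a `confidence` field. That output shape, the 0.8 threshold, and the `run_with_fallback` name are all hypothetical; in practice the confidence score might come from a separate scorer rather than from the model itself.

```python
import json
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Outcome:
    status: str              # "auto" (safe to use) or "needs_review" (route to a human)
    data: Optional[dict]
    reason: str = ""


def validate(payload: dict) -> list[str]:
    """Check the hypothetical contract: non-empty summary, confidence in [0, 1]."""
    errors = []
    summary = payload.get("summary")
    if not isinstance(summary, str) or not summary.strip():
        errors.append("summary must be a non-empty string")
    confidence = payload.get("confidence")
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        errors.append("confidence must be a number")
    elif not 0.0 <= confidence <= 1.0:
        errors.append("confidence must be in [0, 1]")
    return errors


def run_with_fallback(call_model: Callable[[str], str], prompt: str,
                      min_confidence: float = 0.8, max_attempts: int = 2) -> Outcome:
    """Retry on malformed output; degrade to human review instead of failing silently."""
    last_reason = "no attempts made"
    for _ in range(max_attempts):
        raw = call_model(prompt)
        try:
            payload = json.loads(raw)
        except json.JSONDecodeError:
            last_reason = "output was not valid JSON"
            continue
        if not isinstance(payload, dict):
            last_reason = "output was not a JSON object"
            continue
        errors = validate(payload)
        if errors:
            last_reason = "; ".join(errors)
            continue
        if payload["confidence"] < min_confidence:
            # Well-formed but unsure: keep the draft, let a person decide.
            return Outcome("needs_review", payload, "confidence below threshold")
        return Outcome("auto", payload)
    # Every attempt failed validation: the defined degradation path.
    return Outcome("needs_review", None, last_reason)
```

The key design choice is that there is no code path where bad output reaches the user unflagged: every branch ends in either `auto` or `needs_review`.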

Cost management is architecture

AI API costs can spiral fast. We've seen companies spend $50K/month on API calls when better architecture could bring that down to $5K. Caching, prompt optimization, model selection (not everything needs GPT-4), and batching are engineering decisions, not afterthoughts.
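A minimal sketch of two of those levers, exact-match caching and per-task model selection. The model names are placeholders rather than real model IDs, and `call_model` is a hypothetical stand-in for a provider SDK call. A plain dict keeps the sketch self-contained; a production cache would be a shared store with a TTL and eviction.

```python
import hashlib
from typing import Callable

# Hypothetical routing table: cheaper models for narrow, high-volume tasks.
MODEL_FOR_TASK = {
    "classify": "small-model",
    "extract": "small-model",
    "summarize": "medium-model",
}
FALLBACK_MODEL = "large-model"


def pick_model(task: str) -> str:
    return MODEL_FOR_TASK.get(task, FALLBACK_MODEL)


class CachedClient:
    """Exact-match response cache in front of a model call."""

    def __init__(self, call_model: Callable[[str, str], str]):
        self._call_model = call_model
        self._cache: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Include the model in the key so a routing change never serves another model's answer.
        return hashlib.sha256(f"{model}\x00{prompt}".encode("utf-8")).hexdigest()

    def complete(self, task: str, prompt: str) -> str:
        model = pick_model(task)
        key = self._key(model, prompt)
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        response = self._call_model(model, prompt)
        self._cache[key] = response
        return response
```

Exact-match caching only pays off when inputs genuinely repeat (templated documents, common support questions). It also pins one sampled response per input, which is usually what a business workflow wants anyway.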

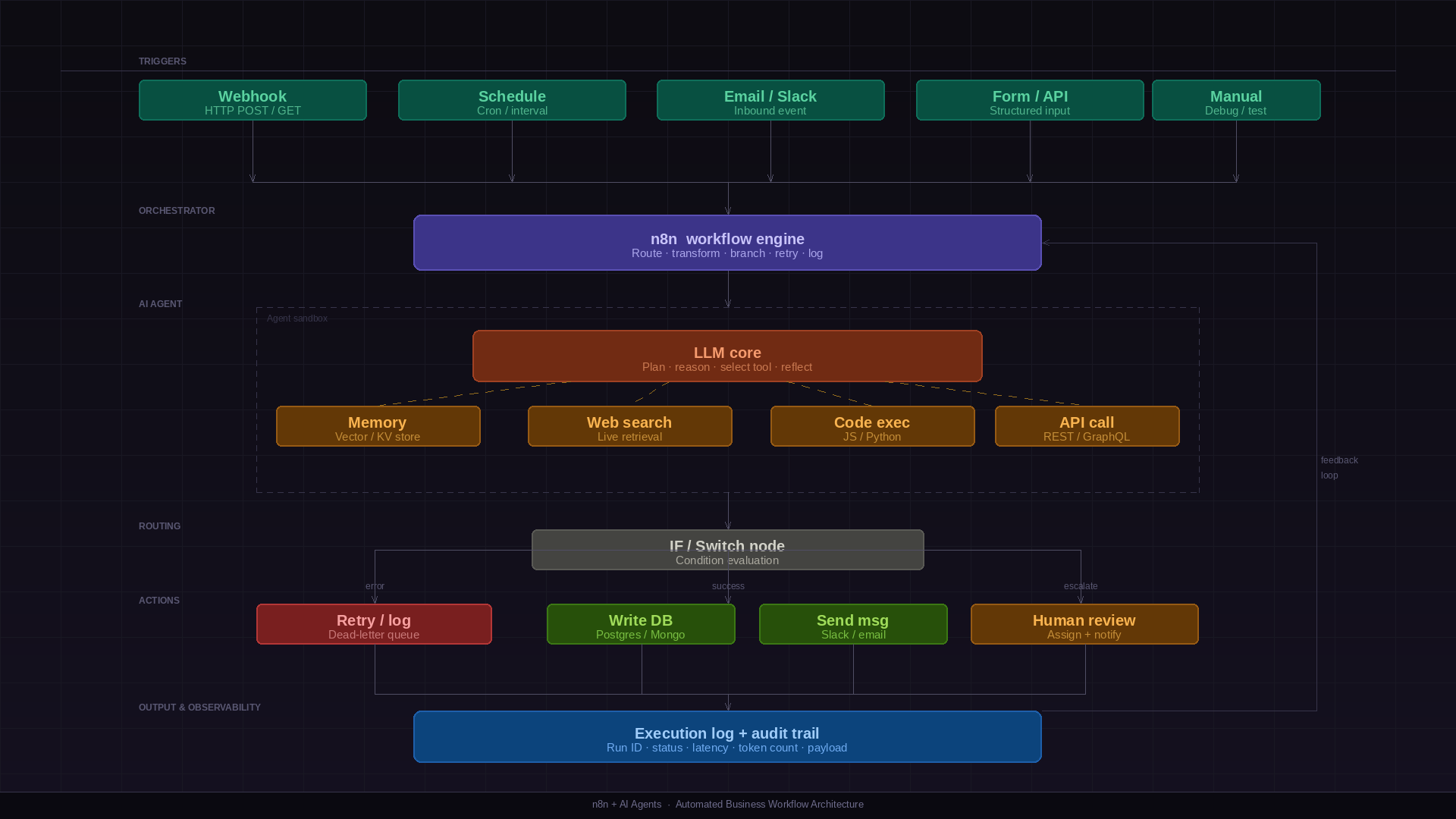

What we actually build

These are the AI features we ship most often. Not demos — production systems with SLAs, real user load, and zero tolerance for hallucinations in the wrong places.

📄 Document processing & extraction pipelines

Structured data extraction from invoices, contracts, and forms. We use function calling and validation layers to enforce the output schema, not just "try to parse it."
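A minimal sketch of the validation layer that sits behind a function call, assuming the model's arguments arrive as a JSON string. The invoice fields and the `parse_invoice` name are hypothetical. Function calling constrains the shape of the output; the explicit type and business-rule checks here are what turn that into something you can rely on.

```python
import json
from dataclasses import dataclass
from datetime import date
from decimal import Decimal, InvalidOperation


class ExtractionError(ValueError):
    """Raised when model output does not satisfy the extraction contract."""


@dataclass(frozen=True)
class Invoice:
    invoice_number: str
    issue_date: date
    currency: str                      # ISO 4217 code, e.g. "EUR"
    line_totals: tuple[Decimal, ...]
    total: Decimal


def parse_invoice(raw: str) -> Invoice:
    """Parse and validate a model's JSON arguments for a hypothetical invoice schema."""
    try:
        data = json.loads(raw)
        invoice = Invoice(
            invoice_number=str(data["invoice_number"]).strip(),
            issue_date=date.fromisoformat(data["issue_date"]),
            currency=str(data["currency"]).strip().upper(),
            # str() first so floats like 4.5 don't carry binary rounding into Decimal
            line_totals=tuple(Decimal(str(x)) for x in data["line_totals"]),
            total=Decimal(str(data["total"])),
        )
    except (KeyError, TypeError, ValueError, InvalidOperation) as exc:
        # JSONDecodeError and bad ISO dates are both ValueErrors.
        raise ExtractionError(f"schema violation: {exc!r}") from exc

    # Type-correct is not the same as correct: apply business-rule checks too.
    if not invoice.invoice_number:
        raise ExtractionError("invoice_number is empty")
    if len(invoice.currency) != 3 or not invoice.currency.isalpha():
        raise ExtractionError(f"bad currency code: {invoice.currency!r}")
    if sum(invoice.line_totals, Decimal("0")) != invoice.total:
        raise ExtractionError("line totals do not add up to the invoice total")
    return invoice
```

Anything that raises `ExtractionError` can be retried or sent to a review queue instead of being written to the ledger.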

🔍 Intelligent search & retrieval systems

RAG pipelines over internal knowledge bases, support docs, and product catalogues. The hard part isn't the embedding — it's chunking strategy, re-ranking, and knowing when to say "I don't know."
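A minimal sketch of two of those pieces: overlapping chunks, and an abstain threshold so the pipeline can say "I don't know" instead of generating from weak context. The scoring function is a deliberately toy stand-in (fraction of query terms found in the chunk) for the embedding search plus re-ranker a real system would use; the window sizes and the 0.5 cutoff are assumptions to tune against your own data.

```python
import re
from typing import Optional


def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into overlapping word windows so a fact spanning a boundary survives."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this window already reached the end of the text
    return chunks


def _terms(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def relevance(query: str, chunk: str) -> float:
    """Toy re-ranker score: share of query terms that appear in the chunk."""
    q = _terms(query)
    return len(q & _terms(chunk)) / len(q) if q else 0.0


def retrieve_or_abstain(query: str, chunks: list[str], k: int = 3,
                        min_score: float = 0.5) -> Optional[list[str]]:
    """Return the top-k chunks that clear the bar, or None to signal 'I don't know'."""
    ranked = sorted(chunks, key=lambda c: relevance(query, c), reverse=True)[:k]
    kept = [c for c in ranked if relevance(query, c) >= min_score]
    return kept or None
```

Returning `None` rather than an empty context makes the abstain case explicit for the caller, which can then answer "I don't know" without ever calling the generator.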

🗂️ Automated categorization & routing

Support ticket triage, lead scoring, content tagging. High-volume, low-latency, with confidence thresholds that route edge cases to humans rather than guessing.
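A minimal sketch of threshold-based routing, assuming a classifier that returns a label and a confidence. The queue names and per-queue thresholds are hypothetical; the idea is that queues where a wrong guess is expensive get a stricter bar, and labels the system doesn't recognize always go to a person.

```python
from dataclasses import dataclass

HUMAN_REVIEW = "human_review"

# Hypothetical per-queue thresholds: stricter where a misroute costs more.
THRESHOLDS = {
    "billing": 0.85,
    "bug_report": 0.80,
    "security": 0.97,
}


@dataclass(frozen=True)
class Prediction:
    label: str
    confidence: float


def route(pred: Prediction, thresholds: dict[str, float] = THRESHOLDS) -> str:
    """Send confident predictions to their queue and everything else to a person."""
    threshold = thresholds.get(pred.label)
    if threshold is None:
        return HUMAN_REVIEW  # label outside the known set: don't guess
    return pred.label if pred.confidence >= threshold else HUMAN_REVIEW


def automation_rate(preds: list[Prediction]) -> float:
    """Share of traffic handled without a human; worth tracking next to accuracy."""
    if not preds:
        return 0.0
    return sum(route(p) != HUMAN_REVIEW for p in preds) / len(preds)
```

Raising a threshold trades automation rate for precision, so both numbers need watching when the thresholds are tuned.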

💬 Natural language interfaces for complex data

Text-to-SQL, conversational dashboards, and plain-English query layers over structured datasets. The UX challenge is managing user expectations when queries fall outside scope.
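One way to keep text-to-SQL inside its scope, sketched here with SQLite since its authorizer hook ships with Python's standard library: a dedicated connection for model-generated queries only permits reads from an allow-listed set of tables, and anything else is rejected before it runs. The `guard` and `run_generated_sql` names and the row cap are hypothetical; other databases would use their own mechanisms, such as a read-only role with table-level grants.

```python
import sqlite3
from typing import Iterable

# Function calls (COUNT, SUM, ...) use this action code; getattr guards older builds.
_SQLITE_FUNCTION = getattr(sqlite3, "SQLITE_FUNCTION", None)


class OutOfScopeQuery(Exception):
    """The generated SQL tried to do something the query layer doesn't allow."""


def guard(conn: sqlite3.Connection, allowed_tables: Iterable[str]) -> None:
    """Install an authorizer so this connection can only read the allowed tables."""
    allowed = frozenset(allowed_tables)

    def authorizer(action, arg1, arg2, db_name, trigger):
        if action == sqlite3.SQLITE_SELECT:
            return sqlite3.SQLITE_OK
        if action == sqlite3.SQLITE_READ:  # arg1 is the table, arg2 the column
            return sqlite3.SQLITE_OK if arg1 in allowed else sqlite3.SQLITE_DENY
        if _SQLITE_FUNCTION is not None and action == _SQLITE_FUNCTION:
            return sqlite3.SQLITE_OK  # allows all functions; tighten with an allow-list on arg2
        return sqlite3.SQLITE_DENY  # writes, DDL, PRAGMA, ATTACH, transactions, ...

    conn.set_authorizer(authorizer)


def run_generated_sql(conn: sqlite3.Connection, sql: str, max_rows: int = 500) -> list[tuple]:
    """Execute model-generated SQL on a guarded connection, capping the result size."""
    try:
        return conn.execute(sql).fetchmany(max_rows)
    except sqlite3.Error as exc:
        # A scoped error the UI can turn into "that's outside what I can answer."
        raise OutOfScopeQuery(str(exc)) from exc
```

Raising a dedicated `OutOfScopeQuery` gives the interface a clean hook for the expectation-management problem: it can explain what the data does cover instead of showing a raw database error.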

✍️ Content generation with human review workflows

First-draft generation for product descriptions, outreach copy, and reports — always with a review step baked in. We don't ship AI content pipelines without a human checkpoint.
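A minimal sketch of a review checkpoint enforced in code rather than by convention: a small state machine where the only path to `PUBLISHED` runs through a named human approval. The states, the `GeneratedDraft` class, and the `ReviewRequired` exception are hypothetical names for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"
    PUBLISHED = "published"


# The only path to PUBLISHED runs through APPROVED, so review can't be skipped.
ALLOWED = {
    Status.DRAFT: {Status.APPROVED, Status.REJECTED},
    Status.REJECTED: {Status.DRAFT},        # edited or regenerated, then reviewed again
    Status.APPROVED: {Status.PUBLISHED},
    Status.PUBLISHED: set(),
}
REVIEW_DECISIONS = {Status.APPROVED, Status.REJECTED}


class ReviewRequired(Exception):
    pass


@dataclass
class GeneratedDraft:
    text: str
    status: Status = Status.DRAFT
    reviewed_by: Optional[str] = None

    def move_to(self, new_status: Status, reviewer: Optional[str] = None) -> None:
        if new_status not in ALLOWED[self.status]:
            raise ReviewRequired(f"{self.status.value} -> {new_status.value} is not allowed")
        if new_status in REVIEW_DECISIONS:
            if not reviewer:
                raise ReviewRequired("approval or rejection needs a named human reviewer")
            self.reviewed_by = reviewer
        self.status = new_status
```

Recording `reviewed_by` on every decision also gives you an audit trail of who signed off on each piece of generated content.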

None of these are glamorous demos. They process thousands of requests daily and are held to the same reliability bar as the rest of your infrastructure.

The honest truth

AI is powerful, but it's not magic. The most successful features we've shipped are narrow in scope, well-validated, and designed with clear failure modes. They automate the tedious 20% of a workflow that users were already doing — invisibly, reliably, without fanfare.

The features that fail are the ones chasing the demo. "It's like having a senior analyst on call" — then it hallucinates on the third query and the user never comes back.

If you're adding AI to your product, start with a specific, measurable workflow problem. Not a vision of intelligence. A step your users currently do manually that they'd rather not.