Cloud spend is the engineering cost that finance notices first and engineering teams understand last. By the time someone escalates the bill, the waste has usually been running for months.

We've audited cloud infrastructure for dozens of clients. The pattern is consistent enough to be depressing: companies overspend by 40–60%, the causes are almost always the same, and most of it is recoverable in weeks — not months.

The common culprits

Over-provisioned compute is the biggest offender. Teams provision for peak load and run at 15% utilization 90% of the time. Unused resources — development environments left running, forgotten load balancers, unattached storage volumes — add up fast.

Then there are data transfer costs, which nobody budgets for until the first surprising bill.

Right-sizing compute

The first step in right-sizing is always measuring actual utilization. We deploy monitoring (CloudWatch, Datadog, or Prometheus) and collect two weeks of real CPU, memory, and network data before making any changes.
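As a rough illustration of how two weeks of samples can turn into a right-sizing decision, here is a minimal sketch. The function names, the 70% headroom threshold, and the rule itself are assumptions for illustration, not the exact rule we apply on engagements. It assumes that dropping one size within an instance family halves vCPUs and memory, so utilization roughly doubles.

```python
from statistics import quantiles

def p95(samples):
    """95th percentile of utilization samples (percent, 0-100).

    Needs at least two samples. quantiles(n=20) returns 19 cut points;
    the last one is the 95th percentile.
    """
    return quantiles(samples, n=20, method="inclusive")[-1]

def recommend(cpu_samples, mem_samples, headroom=70.0):
    """Suggest 'downsize' when p95 CPU and memory would both stay under
    `headroom` percent after halving capacity (utilization doubles)."""
    cpu_after = p95(cpu_samples) * 2
    mem_after = p95(mem_samples) * 2
    return "downsize" if max(cpu_after, mem_after) < headroom else "keep"

# Hypothetical samples: CPU steady at 15%, memory steady at 20%.
print(recommend([15.0] * 100, [20.0] * 100))  # downsize
# Memory at 40% would reach 80% after halving, above the headroom.
print(recommend([15.0] * 100, [40.0] * 100))  # keep
```

Using the 95th percentile rather than the average keeps short bursts from being hidden by long idle periods.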

Most applications can drop one or two instance sizes without any performance impact. A client running m5.2xlarge instances across their fleet saw zero performance degradation after moving to m5.xlarge — saving $3,200/month.

Reserved capacity and savings plans

For stable workloads, committing to 1-year reserved instances or savings plans typically saves 30–40% compared with on-demand pricing. We model the commitment against actual usage patterns to avoid over-committing.
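A simplified version of that modeling might look like the sketch below. It assumes hourly usage expressed in on-demand dollars, a single flat discount, and a commitment that is paid every hour whether or not it is used. The function names and sample numbers are hypothetical.

```python
def total_cost(hourly_usage, commit, discount):
    """Cost for the period: the commitment is billed every hour at the
    discounted rate; usage above it is billed at the on-demand rate."""
    committed = commit * (1 - discount) * len(hourly_usage)
    overflow = sum(max(0.0, u - commit) for u in hourly_usage)
    return committed + overflow

def best_commitment(hourly_usage, discount):
    """Try zero and each observed usage level as the commitment and
    return the (commit, cost) pair with the lowest total cost."""
    candidates = sorted(set(hourly_usage) | {0.0})
    return min(
        ((c, total_cost(hourly_usage, c, discount)) for c in candidates),
        key=lambda pair: pair[1],
    )

# Hypothetical day: $10/hour baseline for 18 hours, $30/hour peak for 6.
usage = [10.0] * 18 + [30.0] * 6
commit, cost = best_commitment(usage, discount=0.35)
print(commit, round(cost, 2))  # 10.0 276.0
```

In this example, committing to the baseline costs about $276 for the day, compared with $360 fully on-demand and $468 if the commitment covered the peak. Only the observed usage levels need checking because the cost is piecewise linear in the commitment, so the minimum always falls on one of them.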

For variable workloads, spot instances (with proper fault tolerance) can save 60–80%. We use them for batch processing, CI/CD runners, and development environments.

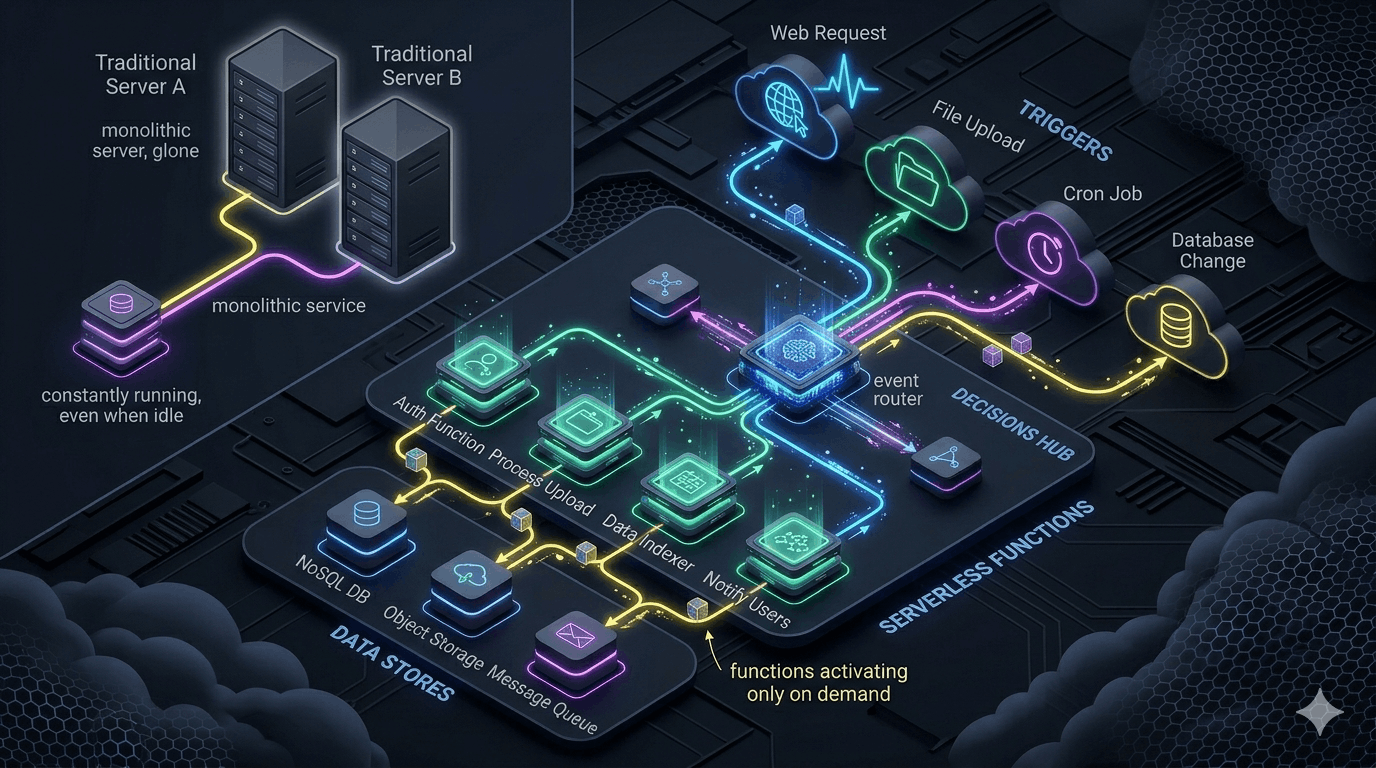

Architecture-level optimization

The highest-impact optimizations are architectural. Moving from always-on servers to serverless for bursty workloads. Implementing proper caching to reduce database load. Using CDNs for static assets instead of serving them from compute instances.

One client reduced their monthly cloud bill from $18K to $6K by adding a Redis caching layer and moving static assets to CloudFront. The engineering effort was about two weeks.
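For readers unfamiliar with how a caching layer takes load off the database, the usual pattern is cache-aside. The sketch below uses a small in-memory class as a stand-in for Redis so it runs without any dependencies. `TTLCache`, `cached_lookup`, and `fake_db` are hypothetical names, and this is not the client's actual implementation.

```python
import time

class TTLCache:
    """In-memory stand-in for a Redis-style cache with per-key expiry."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self.clock() >= expires_at:
            del self._store[key]  # expired: treat as a miss
            return None
        return value

    def set(self, key, value):
        self._store[key] = (value, self.clock() + self.ttl)

def cached_lookup(cache, key, load_from_db):
    """Cache-aside read: check the cache first, fall back to the database
    on a miss, then populate the cache for later readers.
    Note: a None result is never cached in this simple version."""
    value = cache.get(key)
    if value is None:
        value = load_from_db(key)
        cache.set(key, value)
    return value

db_calls = []

def fake_db(key):
    """Hypothetical database read that records each call."""
    db_calls.append(key)
    return f"row-{key}"

cache = TTLCache(ttl_seconds=60)
cached_lookup(cache, "user:1", fake_db)
cached_lookup(cache, "user:1", fake_db)
print(len(db_calls))  # 1: the second read was served from the cache
```

The TTL is the main design choice: a longer TTL removes more database reads but serves staler data.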

The playbook

Our standard optimization engagement follows a repeatable process. Here's the full sequence, and roughly where the savings come from at each stage:

| # | Step | What we do | Typical savings |
|---|---|---|---|
| 01 | Spend audit | Map every dollar to a service, team, and environment | — |
| 02 | Utilization measurement | 2 weeks of real CPU/memory/network data before touching anything | — |
| 03 | Quick wins | Delete unused resources, right-size over-provisioned instances | 10–20% |
| 04 | Reserved capacity | Commit stable workloads to 1-year savings plans | 30–40% on committed spend |
| 05 | Architectural changes | Caching, CDN offload, serverless for bursty workloads | 20–50% (highest impact) |
| 06 | Cost monitoring | Anomaly alerts, budget thresholds, tagging by team/env | Prevents drift |
| 07 | Quarterly review | Revisit as usage patterns change | Ongoing |

Steps 01 and 02 are non-negotiable: without the audit and the utilization data, there is no way to tell which savings are worth chasing. We've seen teams skip straight to "just move to spot instances" and end up saving 15% while leaving 40% on the table because they never addressed the architectural inefficiencies underneath.
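To make the spend audit concrete, here is a minimal sketch of rolling billing line items up by service, team, and environment. The record fields (`service`, `team`, `env`, `cost`) are assumed for illustration; real billing exports use their own column names.

```python
from collections import defaultdict

def rollup(line_items, keys=("service", "team", "env")):
    """Sum cost per (service, team, env); missing tags become 'untagged'."""
    totals = defaultdict(float)
    for item in line_items:
        group = tuple(item.get(k) or "untagged" for k in keys)
        totals[group] += item["cost"]
    return dict(totals)

def untagged_share(totals):
    """Fraction of total spend that has at least one missing tag."""
    total = sum(totals.values())
    missing = sum(cost for group, cost in totals.items() if "untagged" in group)
    return missing / total if total else 0.0

# Hypothetical line items from a billing export.
items = [
    {"service": "ec2", "team": "web", "env": "prod", "cost": 600.0},
    {"service": "ec2", "team": "web", "env": "prod", "cost": 200.0},
    {"service": "s3", "team": None, "env": "dev", "cost": 200.0},
]
totals = rollup(items)
print(totals[("ec2", "web", "prod")])    # 800.0
print(round(untagged_share(totals), 2))  # 0.2
```

The untagged share is worth tracking on its own, since spend that cannot be attributed to a team cannot be assigned an owner to fix it.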

Monitoring and governance

Optimization isn't a one-time project — it's a discipline. Without visibility, costs drift back within a quarter. We set up anomaly alerts, budget thresholds, and tagging policies so teams can track spend by project, environment, and team. Cost becomes a metric you watch weekly, not a line item that shocks you at the end of the month.
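An anomaly alert can be as simple as comparing each day's spend with the recent trend. The sketch below flags days that exceed the trailing 7-day mean by more than three standard deviations. The window, the threshold, and the 1% floor on the deviation are illustrative assumptions, and managed tools use more sophisticated models.

```python
from statistics import mean, stdev

def spend_anomalies(daily_spend, window=7, threshold=3.0):
    """Return indices of days whose spend exceeds the trailing-window mean
    by more than `threshold` standard deviations."""
    flagged = []
    for i in range(window, len(daily_spend)):
        history = daily_spend[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # Floor sigma at 1% of the mean so a perfectly flat history
        # does not flag every tiny increase.
        limit = mu + threshold * max(sigma, 0.01 * mu)
        if daily_spend[i] > limit:
            flagged.append(i)
    return flagged

# Hypothetical daily spend in dollars; day 7 has a spike.
spend = [100, 102, 98, 101, 99, 100, 103, 180, 101]
print(spend_anomalies(spend))  # [7]
```

One limitation of this approach is that a spike inflates the trailing window for the following week, so later anomalies in that period are harder to catch.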

The goal isn't the lowest possible cloud bill. It's the right cloud bill — where every dollar maps to a system that's earning its keep, and surprises are caught in hours, not discovered in the next board deck.